10 Tips for Split Testing Your Landing Pages

How and Why You Should Split Test Pages

Split tests, or A/B tests, are experiments that compare two versions of the same content that differ in a single variable. Each version is shown to a randomly selected portion of the audience, so businesses can see which version delivers better results. You’ll need these tests to optimize the conversion rate of your landing pages.

Unfortunately, it’s common for marketers to rely on intuition when designing a call-to-action or to assume that any decent conversion rate is “good enough.” That’s the wrong attitude, especially considering how quickly consumers’ interests change and evolve.

If you want to speak to your consumers directly and continue to do so, frequent A/B tests focusing on maximizing conversion rates should become a staple in your marketing strategy.

How Does Split Testing Work?

To begin a split test, you must create two similar pieces of content. Both content pieces will look nearly identical, but a single variable will be changed. Your team will randomly show both versions of the content to two similar-sized audiences and analyze which one performed better.

Split Test Example: Call-To-Action (CTA) Button

Your company wants to see if a different CTA button color will prompt more conversions. Since your landing page background is green, you assume a red button will stand out because it’s a complementary color to green. You also want to test purple for the same reason.

However, red is a natural complementary color to green, whereas purple is slightly off. You assume red will win out because it pops more on your green landing page than purple.

To run these tests, your company may use Leadfeeder, Clickmeter, or other analytics tracking tools that complement Google Analytics. These tools help you gather results faster.

After completing the A/B test, you notice that purple won out with a 78% click rate, compared with 45% for red. If you had gone with your gut, you would have ended up with a lower conversion rate. This is likely because red psychologically tells our brains to “stop” or “beware of danger.”

Why Conduct A/B Tests?

The primary purpose of an A/B test is to remove marketing biases and assumptions. Our call-to-action example showed how color psychology can affect a customer’s buying decisions. What looks good to your team, or what customers say in surveys and questionnaires, rarely outweighs how they actually react to a page.

With A/B tests, your audience isn’t aware they’re being tested. They can’t say “test A is better” because they aren’t seeing both tests side by side. This makes it easier for companies to develop strategies based on actions, not opinions, as actions speak louder than words.

The right A/B tests can help you obtain actionable insights that you can apply across your landing pages.

With an optimized landing page, your company benefits from the following:

- Low Bounce Rate: A high bounce rate will tank your sales. Changing the page’s font, color palette, featured images, or user experience can help retain more visitors.
- High Conversion Rate: A 45% conversion rate is reasonable, but a 78% rate is even better. By testing different features, you can steadily push your conversion rate higher.
- Low Cart Abandonment: Shopping cart abandonment is common, but A/B testing can reduce it. For example, lower shipping costs often lead to lower abandonment.
- Increased Web Traffic: Different landing page titles and keywords could bring more interested buyers to your store. Even a changed hyperlink can increase web traffic.

Although testing many variables takes time, the ROI increase is more than worth it. To top it off, A/B testing is inexpensive but incredibly effective at improving conversion rates.

What Landing Page Features Should You Split Test?

Your split tests won’t be effective if you concentrate on the wrong landing page features or take an unfocused testing approach.

Here are eight features you need to A/B split test.

1. Form Length

A long form can deter ready buyers from filling it out, while a short form won’t give you enough information to stay in touch. Most forms ask for a person’s name, email address, and home or business address, and you can typically include up to four form fields without alienating your customers.

2. Call-to-Action Buttons

Your landing page’s call-to-action button can make or break your ability to sell. Google once tested over 40 shades of blue just to see which drove more clicks, but you don’t have to go that far. Experiment with different shapes, sizes, wording, and colors to see what sticks.

3. Content

The content on your landing page should include reviews, testimonials, and language that convinces customers to buy your product or service. You need to be clear, persuasive, and concise, but you won’t know whether you’ve succeeded without multiple split tests.

4. Headlines

Headlines that include a number, “how-to,” “you,” or a question tend to receive more clicks, because readers immediately understand what they’re about to read. Top 10 guides (like the one you’re reading) are some of the highest-converting content on the web.

5. Chatbots

Conversational marketing through chatbots can increase conversions, but robotic chatbots don’t cut it. Your chatbot should eventually lead to a human consultant, but it’s okay to start with lead questions. Experiment with different prompts to see how your customers interact with the bot.

6. Personalization

Personalization tokens, like [first_name] or [location], can help you speak directly to your audience. While personalization works for most browsers and users, it won’t work if a visitor hides their connection, because the data needed to fill the tokens isn’t available. For these hidden visitors, consider removing personalization rather than showing empty or broken tokens.

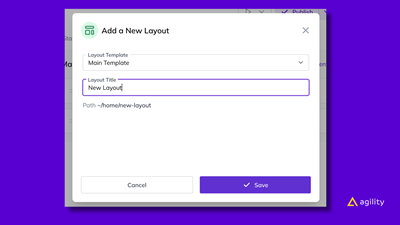
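One way to handle hidden visitors is to fall back to neutral wording whenever a token’s value is unknown. The sketch below assumes a plain-Python template and a dictionary of visitor data; the bracketed token syntax comes from the examples above, while `personalize` and the fallback phrases are hypothetical. Your landing page tool may offer its own fallback setting, so treat this only as an illustration of the logic.

```python
import re

# Matches tokens written like [first_name] or [location] (syntax assumed from the examples above).
TOKEN_PATTERN = re.compile(r"\[([a-z_]+)\]")

# Hypothetical neutral wording used when a visitor's data is unknown.
FALLBACKS = {
    "first_name": "there",
    "location": "your area",
}


def personalize(template: str, visitor: dict) -> str:
    """Replace each [token] with the visitor's value, or a neutral fallback if it's missing."""

    def replace(match: re.Match) -> str:
        key = match.group(1)
        value = visitor.get(key)
        if value:  # known, non-empty value: personalize
            return str(value)
        # Hidden or missing data: use generic copy (empty string if no fallback is defined)
        return FALLBACKS.get(key, "")

    return TOKEN_PATTERN.sub(replace, template)


headline = "Hi [first_name], see what's new in [location]!"
print(personalize(headline, {"first_name": "Ana", "location": "Denver"}))
print(personalize(headline, {}))
```

The first call prints a personalized headline for a known visitor; the second prints “Hi there, see what's new in your area!” for a visitor whose data is hidden.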

7. Layouts

User experience can also affect conversion rates. If your landing page isn’t laid out logically or your customers can’t figure out how to check out, you’ll sell fewer products or services. By rearranging the layout, you could reinvent your worst-performing pages.

8. Trust Marks

Trust marks add credibility to your product or service. When customers see that key industry players or experts endorse your company, they’ll feel more confident in their purchase. You could test how many trust marks you need to maximize conversions.

10 Tips on How to A/B Test Your Landing Pages

Like other marketing techniques, there’s a right and wrong way to create a viable A/B testing strategy.

Here are ten tips you’ll need to split test your landing pages effectively.

1. Pick a Variable and Have a Goal

You can test thousands of variables, but to evaluate the effect of any one of them, you’ll need to isolate a single independent variable. If you don’t, you won’t know what caused the change. The previous section lists several variables you can start with.

Next, set a goal for the test. You know you want to increase your conversion rate, but by how much? If a 30% increase is enough (and obtainable), don’t stop testing until you reach that goal.
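A “30% increase” can mean a relative lift (45% to 58.5%) or 30 percentage points (45% to 75%), so it helps to pin that down before you start. This minimal sketch assumes relative lift; `relative_lift` and `meets_goal` are hypothetical helper names, and the rates reuse the CTA example, treating red as the baseline.

```python
def relative_lift(control_rate: float, challenger_rate: float) -> float:
    """Relative change of the challenger over the control, e.g. 0.30 means +30%."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (challenger_rate - control_rate) / control_rate


def meets_goal(control_rate: float, challenger_rate: float, goal: float) -> bool:
    """True if the challenger's relative lift reaches the goal (0.30 for a 30% increase)."""
    return relative_lift(control_rate, challenger_rate) >= goal


# Rates from the CTA example: red (baseline) at 45%, purple (challenger) at 78%
lift = relative_lift(0.45, 0.78)
print(f"Relative lift: {lift:.1%}")       # Relative lift: 73.3%
print(meets_goal(0.45, 0.78, goal=0.30))  # True
```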

2. Build a Control and Challenger

With your independent variable, dependent variable (“increase conversion rates”), and your goal (desired outcome), you can set up an unaltered version of your testing scenario. This version is called your “control” scenario, while your altered/changed version will be your “challenger.”

Your first A/B test should pit your control against a challenger so you can see whether your current landing page is working. Once you’ve established that baseline, you can test two challengers against each other.

3. Split Test Groups Equally

An unequal testing group can skew the results of your test. To have conclusive results, split your testing group 50/50, regardless of how many audience types you’ll use. How you do this will vary based on your tool, but most high-quality tools will automatically split your traffic.

Remember that your test groups have to be more similar than different. For example, freelancers and startup owners can be compared, but enterprise owners are too different from either group.
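Most testing tools split traffic for you, but the idea behind a consistent 50/50 split is simple enough to sketch. This minimal example assumes every visitor has a stable ID (such as a first-party cookie value); `assign_variant` and the experiment name are hypothetical, and commercial tools may bucket visitors differently.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str) -> str:
    """Deterministically assign a visitor to 'control' or 'challenger' (50/50)."""
    # Hashing experiment + visitor ID gives a stable, roughly uniform number,
    # so a returning visitor always lands in the same group.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % 100  # 0..99
    return "control" if bucket < 50 else "challenger"


# Check the split on 10,000 hypothetical visitor IDs
counts = {"control": 0, "challenger": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}", "cta-color")] += 1
print(counts)  # each count should land near 5,000
```

Hashing, rather than flipping a coin on every page view, means a returning visitor keeps seeing the same version, which keeps the two groups cleanly separated.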

4. Only Run One Test at a Time

It’s unwise to test more than one feature or variable during a single campaign, even if the assets differ. With this strategy, it can be hard to figure out what change led to the result, which would force you to conduct the same A/B test again. By “saving” time, you’re wasting it.

For example, an A/B test campaign that looks at the best traffic sources for your landing pages would interfere with your call-to-action button A/B test campaign and complicate both results.

5. Try Out Website Analytics Tools

Technology makes everything easier. Without A/B testing tools, it would be nearly impossible to compile the results of your campaigns quickly. Some tools can also simplify the testing process by flagging features that need improvement or offering suggestions.

After your visitors engage with both page variations, you can view the results of each test. In most tools, you can also set the test’s date range or frequency and automate certain steps.

6. Run Test Variables Simultaneously

Timing plays a role in the success of your marketing campaigns, and split tests are no exception. Your A/B test may show different results depending on the day, week, or month you run it. For this reason, you need to run both variations at the same time.

There are a few exceptions to this rule. For example, if you’re testing the best time to run a flash sale, you’ll need to run the same landing page with the same discount again a few weeks after the first run.

7. Don’t Stop Your Tests Too Soon

While there is no hard and fast rule for how long you should run an A/B test, experts generally recommend a minimum of two weeks. Running a test for longer than six weeks isn’t recommended: if a test needs that long to produce a clear result, you may not have enough traffic to detect a pattern.

However, the right test length depends mainly on the nature of your company and how you run the test. For example, most email A/B tests produce actionable results within two hours.
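To estimate how long your own test needs, you can work backward from the traffic required to detect the lift you care about. This sketch uses the common normal-approximation formula for comparing two proportions, with a 5% significance level and 80% power as assumed defaults; the baseline rate, target rate, and daily traffic are hypothetical, and your testing tool may use a different method.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, target: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH group to detect baseline -> target (two-sided test)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    p_bar = (baseline + target) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(baseline * (1 - baseline)
                                      + target * (1 - target))) ** 2
    return math.ceil(numerator / (target - baseline) ** 2)


def days_needed(per_variant: int, daily_visitors: int) -> int:
    """Days to fill both groups when traffic is split 50/50."""
    return math.ceil(2 * per_variant / daily_visitors)


# Hypothetical page: 5% conversion today, hoping to detect a lift to 6%, 1,000 visitors/day
n = sample_size_per_variant(0.05, 0.06)
print(n, "visitors per variant")
print(days_needed(n, 1_000), "days")
```

With these made-up inputs, the sketch asks for a little over 8,000 visitors per variant, or 17 days at 1,000 visitors a day, which falls inside the two-to-six-week window above. A smaller expected lift or less traffic pushes that number up quickly.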

8. Get and Apply Control Group Feedback

A/B tests will help you gather quantitative data, but they won’t tell you why a person prefers one landing page over another. If you can reach your users after the test, send them a poll asking why they purchased your product or why they decided to leave the landing page.

You can add this survey to your landing page after visitors purchase your product or service, or as they leave the site. For example, a “before you go” pop-up could appear when a visitor moves to close the tab.

9. Consider if Change is Necessary

Most A/B tests will raise or lower your conversion rate, but not every test produces significant results. Is it worth the swap if a royal blue button receives 2% more conversions than a sky blue button? With a different group of visitors, that 2% increase could just as easily become a 2% decrease.

For tests that present a marginal increase, consider doing the test again. When in doubt, use test results that show a rise of 10% or higher, as they’re more likely to stay consistent.
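To check whether a small difference like the royal blue versus sky blue gap is real, a common approach is a two-proportion z-test. The sketch below assumes you have raw visitor and conversion counts for each version; the counts are made up, the 2% is treated as a relative lift (10.0% to 10.2%), and `two_proportion_z_test` is a hypothetical helper rather than any tool’s built-in report.

```python
import math
from statistics import NormalDist
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # overall rate, assuming no real difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: sky blue converts at 10.0%, royal blue at 10.2%
z, p = two_proportion_z_test(conv_a=500, n_a=5_000, conv_b=510, n_b=5_000)
print(f"z = {z:.2f}, p = {p:.2f}")
```

With these hypothetical counts, the p-value comes out around 0.74, far above the conventional 0.05 cutoff, so the difference is indistinguishable from noise. That is exactly the kind of marginal result worth retesting before you switch.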

10. Never Stop Optimizing Your Pages

Optimization isn’t a one-and-done deal. As consumer tastes change, so do your customers’ expectations. You have to keep running A/B tests on the same landing page features, month after month, to see if your content is still relevant.

Relevance isn’t the only thing that keeps you competitive. Even a relevant feature can usually be optimized further. Don’t settle for 40% when you can reach 70%!

Conclusion

A/B tests are one of the most effective marketing tactics in your tool belt. A brilliant split testing strategy can help you understand your audience, capitalize on current industry trends, and increase your ROI. However, leading your customers to the finish line requires targeted testing.

A competent A/B testing strategy looks at the eight critical landing page features covered above and isolates one variable at a time. For example, you could test a three-field form against a four-field form. Then, you must apply a few best practices, like running one test at a time and splitting test groups evenly.

But above all, you should never stop testing. An optimized landing page requires testing many variables, gathering customer feedback, and using analytics tools that translate your data into a usable format.

While that sounds like a lot of work, the benefits of A/B testing far outweigh the costs.

About the Author

Jessica Perkins is a writer and SaaS marketing consultant who helps businesses scale up their marketing efforts. She is obsessed with learning and is also passionate about sculpting.
